Getting traffic to an ecommerce store is only part of the work. Growth becomes more consistent when the store improves its ability to turn visits into purchases. This is why A/B testing plays such an important role in ecommerce conversion rate optimization.

Instead of relying on assumptions about what customers prefer, brands can test different versions of pages and elements to understand what actually leads to more clicks, more add-to-carts, and more completed orders.

In practice, A/B testing helps ecommerce teams make better decisions about copy, design, user experience, and purchase flow. It gives structure to optimization efforts and makes conversion improvement a repeatable process rather than a series of isolated changes.

When used well, it supports both better user experience and better commercial performance.

What Is A/B Testing and Why It Matters for Ecommerce CRO

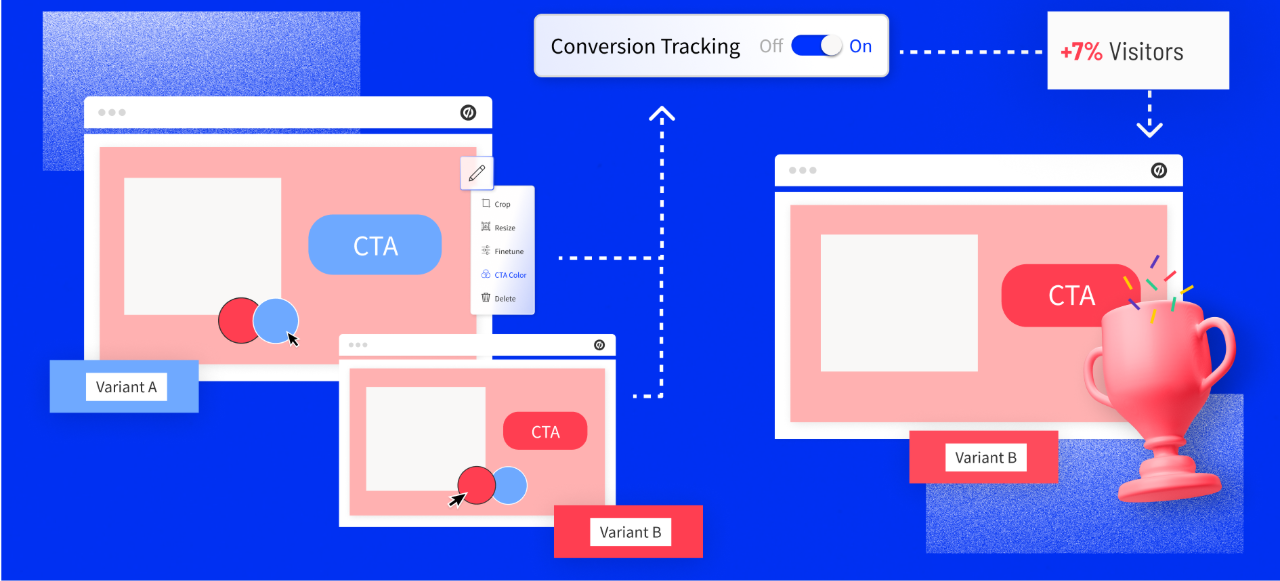

A/B testing is the process of comparing two versions of a page or an element on the page to determine which one performs better based on a defined goal.

In ecommerce, that goal often relates to revenue generation, such as increasing purchases, improving add-to-cart rates, reducing cart abandonment, or increasing average order value.

Version A is the current version, and version B includes one specific change. Traffic is split between the two versions, and performance is measured over time. At the end of the test, the team evaluates which version produced better results.

This method matters because ecommerce optimization is often full of opinions. Teams may disagree about whether a larger call to action button will help, whether shorter product descriptions convert better, or whether more trust signals near checkout will reduce friction.

A/B testing replaces these opinions with evidence. It allows teams to learn what works for their audience, in their store, under their conditions.

This is especially important in ecommerce CRO because small improvements can create meaningful business results. A higher conversion rate means more revenue from the same traffic, provided average order value holds steady.

If a store already invests in paid media, SEO, email, or social acquisition, improving conversion makes every traffic channel more efficient. It is one of the most practical ways to improve performance without simply increasing ad spend.
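To make the arithmetic concrete, here is a minimal sketch in Python. The traffic, conversion, and order-value figures are hypothetical and chosen only for illustration, and the function name `monthly_revenue` is an assumption rather than part of any analytics tool.

```python
def monthly_revenue(visitors: int, conversion_rate: float, average_order_value: float) -> float:
    """Revenue = visitors x conversion rate x average order value."""
    return visitors * conversion_rate * average_order_value


# Hypothetical store: same traffic and order value, only the conversion rate changes.
baseline = monthly_revenue(50_000, 0.020, 60.0)  # 2.0% conversion
improved = monthly_revenue(50_000, 0.022, 60.0)  # 2.2% conversion

print(f"baseline revenue: {baseline:,.0f}")
print(f"improved revenue: {improved:,.0f}")
print(f"relative lift:    {(improved - baseline) / baseline:.1%}")
```

In this example a 0.2 percentage point gain in conversion rate is a 10% gain in revenue, with no change in traffic or ad spend.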

How A/B Testing Improves Conversion Rates

A/B testing improves conversion rates by identifying which version of a shopping experience helps users move forward more easily. In some cases, that means reducing confusion. In others, it means strengthening trust, clarifying product value, or making the next step more obvious.

For example, a product page may be receiving traffic but underperforming in add-to-cart rate. The issue might not be the product itself. The problem may be that:

- The product images do not clearly demonstrate how the product is used

- The copy does not address common objections

- The layout pushes important information too far down the page

An A/B test helps isolate changes and evaluate whether they improve performance.

The same principle applies across the funnel. A landing page may attract visitors but fail to generate intent. A checkout page may lose users because it feels too long or uncertain. A/B testing helps teams identify these friction points and improve them step by step.

Over time, this creates a more disciplined approach to ecommerce CRO. Instead of redesigning pages based on trends or personal taste, teams build an optimization process around actual user behavior and A/B testing best practices.

This usually leads to better decisions, more efficient testing cycles, and stronger long-term growth.

What You Should Test in an Ecommerce Store

Not every element in a store has the same impact on conversion. The best testing opportunities are usually found in high-intent areas where users are closer to making a purchase.

These include product detail pages, cart flows, checkout steps, and landing pages built for paid or promotional traffic.

CTAs and Purchase Actions

Call to action elements are some of the most common testing areas in ecommerce because they directly influence user movement through the buying journey.

A store can test button text, size, placement, spacing, and visual prominence. In some cases, a more direct phrase improves performance. In others, a softer or clearer instruction works better.

The goal is not to find a universally perfect button, but to understand which version helps this audience take action more confidently.

A product with a stronger impulse-buy profile may respond well to more immediate action language, while a higher-consideration product may benefit from language that feels more reassuring.

Product Images and Videos

Visual presentation is often central to conversion in ecommerce because customers cannot physically inspect the product.

A/B testing in ecommerce allows teams to compare different photo styles, image order, zoom options, lifestyle images, demonstration videos, or close-up detail shots.

The most effective visual setup depends on the product category and the stage of decision-making. Apparel shoppers may need more fit and usage context, while a supplement brand may need visuals that support clarity and trust.

Testing helps determine which combination better supports understanding and purchase intent.

Product Descriptions and Copy

Product copy plays a critical role in ecommerce conversion because it shapes how customers perceive value, relevance, and trust. While some audiences prefer concise, easy-to-scan descriptions, others need more detailed information before they feel confident enough to buy.

In addition to influencing user decisions, well-structured product copy also supports SEO by aligning with relevant search intent and keywords, helping attract more qualified traffic to the page.

A/B testing in ecommerce helps identify which type of messaging resonates most by comparing different approaches, such as:

- Short vs. detailed product descriptions

- Feature-led vs. benefit-led copy

- Variations in product titles and keyword positioning

- Stronger objection handling (e.g., addressing doubts, risks, or misconceptions)

- Different ways of structuring claims and key information on the page

The goal is not simply to change wording, but to understand what makes the product easier to evaluate and more compelling to purchase.

Page Layout and UX Structure

The layout of a page changes how users process information. The order of elements, the spacing between sections, the visibility of important details, and the overall flow of the page all influence behavior.

A/B tests can evaluate different structures for the product page, changes in content hierarchy, repositioning of reviews, or adjustments to mobile layouts.

A good ecommerce UX optimization test, on mobile as much as on desktop, focuses on improving the shopping path rather than just making the page look cleaner. When users find the right information faster and feel more certain about what to do next, conversion usually improves.

Trust Elements and Social Proof

Customers often hesitate because they are unsure whether the product will work, whether the brand is credible, or whether the purchase experience will be safe. This makes trust signals highly relevant for A/B testing.

A store can test customer reviews, testimonial placement, return policy visibility, secure payment messaging, shipping clarity, product guarantees, or user-generated content.

These elements can reduce perceived risk and help customers move from interest to action with more confidence.

How to Run A/B Tests in Ecommerce

Running effective A/B tests requires more than choosing an element and changing it.

Strong tests begin with a clear question and follow a structured process that connects experimentation to business outcomes.

Start With a Clear Hypothesis

Every useful test should begin with a hypothesis grounded in data or observation. Instead of saying that the team wants to test a new product title, it is better to define why that change may improve performance.

A stronger approach would be to note that users are landing on the page but not scrolling enough to reach deeper product details.

Based on that behavior, the team may hypothesize that a clearer and more benefit-focused title near the top of the page will improve engagement and increase add-to-cart rate.

This matters because hypotheses make tests easier to interpret. If the team knows what behavior it expects to change and why, the analysis becomes more useful after the test ends.

Choose One Meaningful Variable

A/B testing works best when the difference between the two versions is clear and controlled. If the team changes the button text, the image set, and the product copy all at the same time, it becomes difficult to know which change caused the result.

For this reason, it is usually better to test one meaningful variable per experiment, especially when the goal is learning rather than simply shipping a redesigned page.

This allows the business to build a reliable knowledge base over time.

There are situations where broader page tests make sense, especially when redesigning a section with several related elements, but even then the team should be aware that interpretation becomes less precise.

Segment Traffic Properly

A/B tests depend on fair comparison.

Traffic should be split randomly, and usually evenly, between versions, and the audience in each group should be similar enough that the result reflects the variation rather than a distortion in traffic quality.

This is particularly important in ecommerce because user intent varies by device, source, geography, and campaign. A test may perform well on desktop and poorly on mobile, or may behave differently for returning visitors than for first-time users.

Segmenting and reviewing results by relevant audience groups helps prevent incorrect conclusions.
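The two ideas in this section, a fair split and a segment-level review, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular testing platform works: the function names, the experiment name, and the session records are all hypothetical. Hashing the visitor ID is one common way to keep a returning visitor in the same version across visits.

```python
import hashlib
from collections import defaultdict


def assign_variant(visitor_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a visitor to a variant with a roughly even split."""
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


def conversion_by_segment(sessions):
    """Return conversion rate keyed by (device, variant)."""
    totals = defaultdict(lambda: [0, 0])  # key -> [visitors, orders]
    for s in sessions:
        key = (s["device"], s["variant"])
        totals[key][0] += 1
        totals[key][1] += 1 if s["purchased"] else 0
    return {key: orders / visitors for key, (visitors, orders) in totals.items()}


# Hypothetical session records, for illustration only.
sessions = [
    {"device": "desktop", "variant": "A", "purchased": True},
    {"device": "desktop", "variant": "A", "purchased": False},
    {"device": "desktop", "variant": "B", "purchased": True},
    {"device": "mobile", "variant": "A", "purchased": False},
    {"device": "mobile", "variant": "B", "purchased": False},
    {"device": "mobile", "variant": "B", "purchased": True},
]

rates = conversion_by_segment(sessions)
for (device, variant), rate in sorted(rates.items()):
    print(f"{device:8s} {variant}: {rate:.0%}")
```

Including the experiment name in the hash key means the same visitor can land in different groups across different experiments, which helps keep tests independent of each other. Real segments need far more sessions than this toy list before their rates mean anything.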

Run the Test Long Enough

One of the most common A/B testing mistakes is ending a test too early. Early results can be misleading because short-term fluctuations are normal, especially when sample sizes are small or traffic quality changes from day to day.

A test should run long enough to collect enough data for meaningful interpretation. The exact duration depends on traffic volume and baseline conversion rate, but the general principle is simple: do not make decisions based on unstable data. Reliable testing requires patience and enough volume to distinguish real performance differences from noise.
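As a rough guide to what "enough volume" can mean, the sketch below uses a standard sample-size approximation for comparing two conversion rates. The significance level (5%), statistical power (80%), and the example baseline and lift are assumptions made for illustration; testing tools may use different methods, and the function names here are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed in each variant to detect the given lift
    with a two-sided test on two proportions."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


def days_needed(per_variant: int, daily_visitors: int, variants: int = 2) -> int:
    """Days required if all daily visitors enter the test."""
    return ceil(per_variant * variants / daily_visitors)


# Hypothetical store: 2% baseline conversion, hoping to detect a 10% relative lift.
n = visitors_per_variant(0.02, 0.10)
print(f"visitors per variant: {n:,}")
print(f"days at 4,000 visitors/day: {days_needed(n, 4_000)}")
```

With these inputs the estimate is on the order of 80,000 visitors per version, which shows why low-traffic stores often need to test bolder changes: a larger expected lift shrinks the required sample considerably.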

Track the Right Metrics

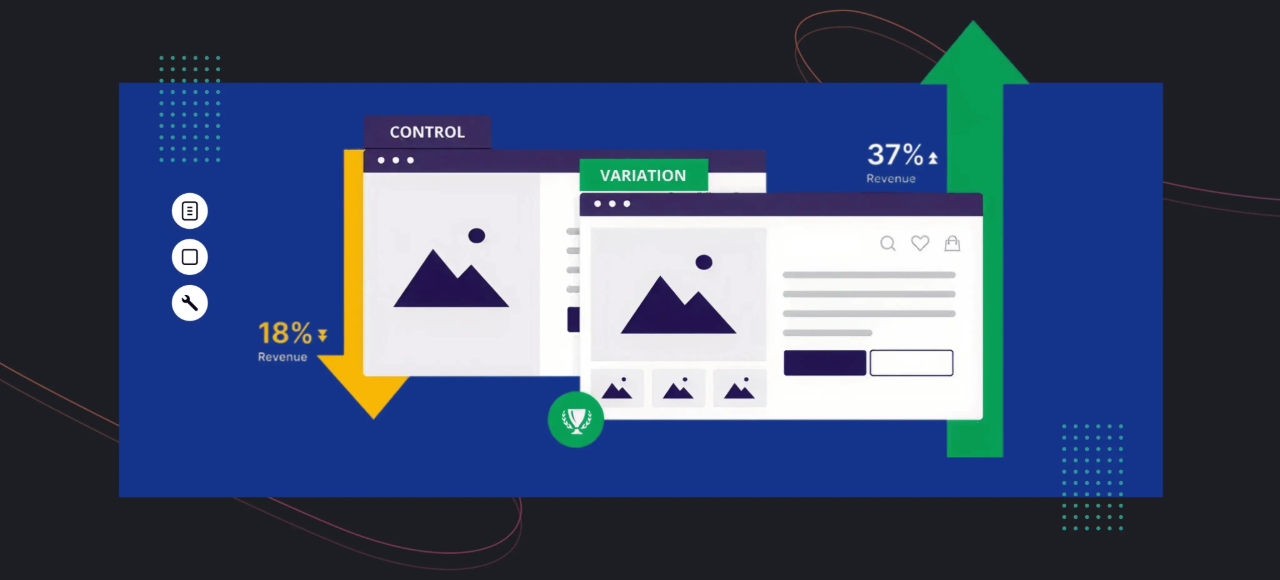

Conversion rate is usually the main metric in ecommerce A/B testing, but it should not be the only one reviewed. A variation may improve clicks on a button while reducing completed purchases, or it may raise conversion rate while lowering average order value.

For this reason, teams should define a primary metric and review supporting metrics as part of the analysis.

Depending on the goal, relevant indicators may include add-to-cart rate, checkout completion rate, bounce rate, revenue per visitor, average order value, and abandonment rate.

A test should be evaluated in context. A surface-level improvement is only useful if it supports overall business performance.
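The following sketch shows how a primary metric and a few supporting metrics might be computed side by side. The class name, field names, and all totals are hypothetical, made up to illustrate a variation that wins on conversion rate while losing on order value.

```python
from dataclasses import dataclass


@dataclass
class VariantTotals:
    visitors: int
    add_to_carts: int
    orders: int
    revenue: float


def summarize(t: VariantTotals) -> dict:
    """Compute the primary and supporting metrics for one variant."""
    return {
        "add_to_cart_rate": t.add_to_carts / t.visitors,
        "conversion_rate": t.orders / t.visitors,
        "average_order_value": t.revenue / t.orders if t.orders else 0.0,
        "revenue_per_visitor": t.revenue / t.visitors,
    }


# Hypothetical totals, for illustration only.
version_a = summarize(VariantTotals(visitors=10_000, add_to_carts=800, orders=200, revenue=12_000.0))
version_b = summarize(VariantTotals(visitors=10_000, add_to_carts=900, orders=220, revenue=11_880.0))

for name in version_a:
    print(f"{name:22s} A={version_a[name]:.4f}  B={version_b[name]:.4f}")
```

With these made-up totals, version B has the higher add-to-cart and conversion rates but the lower revenue per visitor, because its average order value dropped. Reviewing only the conversion rate would have hidden that trade-off.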

How to Analyze A/B Test Results and Make Decisions

Once a test has collected enough data, the next step is to interpret what happened and decide what to do with the result. This stage is where many teams either extract valuable insight or reduce the test to a simplistic win or loss.

The first question is whether one variation clearly outperformed the other on the primary metric, that is, by a margin larger than random variation would plausibly produce. If the answer is yes, the team can consider implementing the stronger version. If the answer is no, that does not mean the test failed.

A neutral result still teaches the business something about user behavior and can help refine future tests.
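One common way to judge whether a difference is clear rather than noise is a two-proportion z-test. The sketch below is a simplified illustration that assumes a fixed sample size decided in advance; the function name and the order counts are hypothetical, and many testing platforms use other statistical approaches.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(orders_a: int, visitors_a: int,
                          orders_b: int, visitors_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rate, B minus A."""
    p_a = orders_a / visitors_a
    p_b = orders_b / visitors_b
    pooled = (orders_a + orders_b) / (visitors_a + visitors_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (p_b - p_a) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 2.0% vs 2.2% conversion on 10,000 visitors each.
z, p_value = two_proportion_z_test(200, 10_000, 220, 10_000)
print(f"z = {z:.2f}, p-value = {p_value:.3f}")
```

With these counts the p-value comes out well above the conventional 0.05 threshold, so a lift that looks like 10% on a dashboard would still count as a neutral result at this sample size.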

It is also important to look beyond the final conversion number. For example:

- A higher click-through rate with lower order completion may indicate increased interest without sufficient support later in the journey

- A drop in time on page combined with higher conversion may suggest improved clarity and efficiency rather than lower engagement

This is why analysis should connect behavior to outcomes. The team should ask what changed in the user journey, why that change may have happened, and what it suggests for the next iteration.

After the analysis, useful insights should be documented. Testing becomes more valuable when learnings accumulate.

Over time, the business builds a clearer understanding of what its audience responds to, which objections matter most, and which page structures consistently support conversion.

Using A/B Testing to Optimize the Ecommerce Funnel

A/B testing is especially effective when it is applied to the full purchase journey rather than isolated page elements. Different stages of the funnel involve different questions and friction points, which means they require different testing priorities.

Product Pages

Product detail pages often have the greatest testing potential because they are the point where interest turns into buying intent. Shoppers arrive here with questions about value, relevance, quality, and trust.

Good testing on the product page focuses on helping users answer those questions more easily.

This can include testing image order, title structure, benefit presentation, review placement, shipping information visibility, sticky add-to-cart elements, and mobile content hierarchy.

Since these pages sit close to the conversion event, even modest gains here can have strong revenue impact.

Checkout Flow

Checkout is where many stores lose ready-to-buy users. A/B testing at this stage should focus on reducing friction and uncertainty.

The team may test guest checkout options, form length, field order, progress indicators, payment messaging, shipping clarity, and error handling.

The purpose is to make completion feel easy and trustworthy. In many cases, checkout optimization is not about persuasion as much as it is about removing obstacles.

Users who have already decided to buy often abandon because the process feels too slow, too confusing, or too risky.

Landing Pages

Ecommerce landing pages are another important area for testing, especially when they support paid campaigns, product launches, or promotional offers.

Here the main question is whether the page matches user expectations and moves visitors toward the next action.

Useful tests may involve headline framing, page structure, hero section copy, proof placement, offer presentation, or the balance between information and simplicity.

Because landing pages often shape first impressions, improvements here can strengthen the quality of traffic entering the rest of the funnel.

A/B Testing Best Practices for Continuous Growth

A/B testing is most effective when it is treated as an ongoing optimization system rather than a one-time tactic.

Ecommerce stores change over time, customer expectations evolve, and what worked six months ago may not work the same way today. This is why the strongest teams build testing into their normal decision-making process.

One best practice is to prioritize high-impact areas first. Instead of testing low-traffic sections with limited commercial value, teams should begin where purchase intent is strongest.

Product pages, cart steps, checkout pages, and offer-focused landing pages usually provide the most meaningful opportunities.

Another important practice is to build tests around real business questions. The best experiments are not random changes. They are structured attempts to understand what improves customer decision-making and store performance.

Documentation also matters. Teams should record what was tested, why it was tested, what happened, and what was learned. This helps prevent repeated mistakes and creates stronger foundations for future experiments.
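A test log does not need special software. As one possible shape, the sketch below stores each experiment as a structured record that can be appended to a file as a line of JSON. The field names and the example entry are hypothetical; teams should adapt them to whatever they actually need to remember.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ExperimentRecord:
    name: str             # what was tested
    hypothesis: str       # why it was tested
    primary_metric: str
    variants: dict        # variant label -> description of the change
    result: str           # what happened
    learning: str         # what was learned
    supporting_metrics: list = field(default_factory=list)


def to_json_line(record: ExperimentRecord) -> str:
    """Serialize one record as a single JSON line, ready to append to a log file."""
    return json.dumps(asdict(record))


# Hypothetical entry, for illustration only.
record = ExperimentRecord(
    name="pdp-title-benefit-led",
    hypothesis="A benefit-focused title near the top of the page will raise add-to-cart rate.",
    primary_metric="add_to_cart_rate",
    variants={"A": "current title", "B": "benefit-led title"},
    result="inconclusive",
    learning="Title wording alone did not move add-to-cart; test image order next.",
    supporting_metrics=["conversion_rate", "revenue_per_visitor"],
)

print(to_json_line(record))
```

Keeping the hypothesis and the learning in the same record makes it easier to see, months later, whether a result confirmed or contradicted what the team expected.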

Finally, continuous iteration is essential. A/B testing does not produce one final version of a perfect page. It supports ongoing refinement. Each result should inform the next hypothesis, and each learning should move the store toward a more effective customer experience.

Common A/B Testing Mistakes That Hurt Conversion Rates

Many ecommerce brands test regularly but still fail to improve performance because the testing process itself is weak. Several mistakes appear frequently and reduce the value of experimentation.

Common issues include:

- Testing without a strong hypothesis: When tests are based only on preference or curiosity, results become harder to interpret and less useful for future optimization.

- Ending tests too early: Early performance lifts often disappear as more data is collected, leading to premature and incorrect decisions.

- Testing too many variables at once: Bundling multiple changes makes it difficult to isolate what actually influenced the result, reducing learning.

- Focusing on weak metrics instead of business outcomes: Increases in clicks or engagement may look positive, but if they do not improve purchases, revenue per visitor, or other key metrics, they may not drive real impact.

- Treating testing as a disconnected activity: A/B testing is most effective when integrated into a broader ecommerce CRO strategy, supported by user behavior analysis and funnel insights.

Conclusion

A/B testing helps ecommerce brands improve conversion rates by replacing assumptions with evidence. It gives teams a way to evaluate changes in copy, design, UX, and checkout flow based on real customer behavior rather than internal opinion.

When applied thoughtfully, A/B testing supports better product pages, stronger landing pages, smoother checkout experiences, and more efficient funnels overall.

It also creates a more disciplined optimization process, where each test adds to the store’s understanding of what drives purchases.

The most important point is that A/B testing should not be treated as a one-off tactic. It is most valuable when used continuously, with clear hypotheses, strong measurement, and a focus on meaningful business outcomes.

For ecommerce brands that want to improve conversion in a structured and sustainable way, it remains one of the most practical tools available.